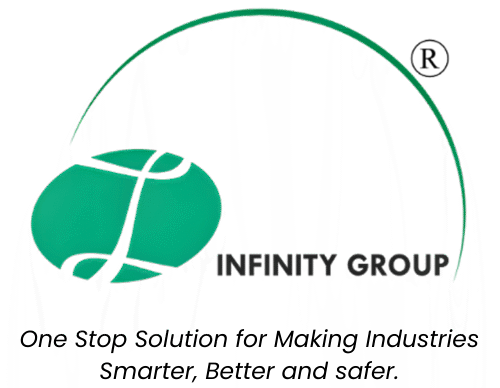

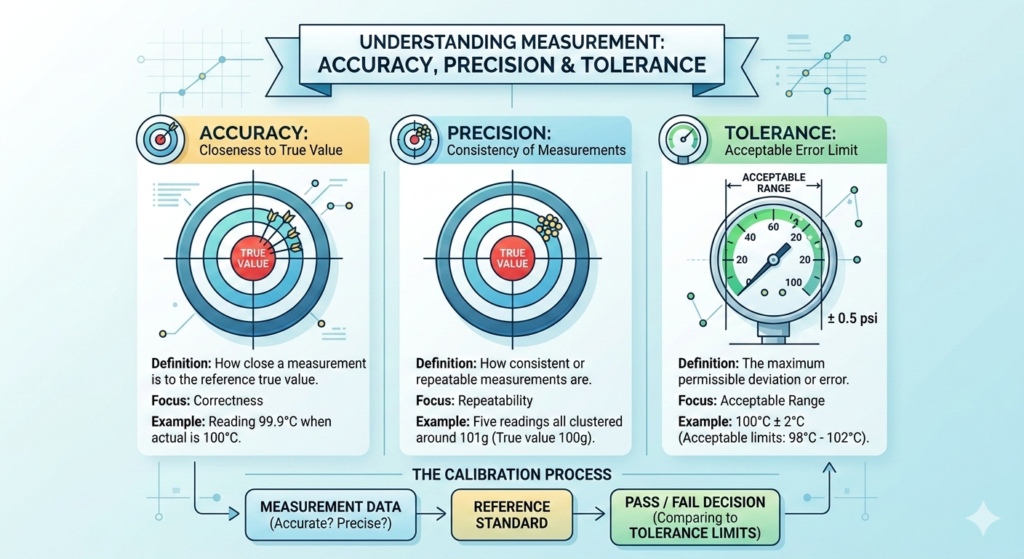

In calibration and measurement science, accuracy, precision, and tolerance are three different but related concepts used to evaluate the performance of measuring instruments.

1. Accuracy

Accuracy refers to how close a measured value is to the true or accepted reference value.

Key points

- Indicates correctness of measurement.

- Determined by comparing the instrument reading with a standard reference during calibration.

- High accuracy means very small measurement error.

Example

Actual temperature = 100°C

Instrument reading = 100.1°C

The error is only +0.1°C, so the reading is very close to the true value and the instrument is highly accurate.
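The error in this example can be worked out as a short sketch. The function name `measurement_error` is illustrative, and the sign convention (instrument reading minus reference value) is an assumption; it is the common one, but a given calibration procedure may define it differently.

```python
def measurement_error(reading, reference):
    """Signed error: instrument reading minus reference value (assumed convention)."""
    return reading - reference


reference_c = 100.0   # actual temperature from the example
reading_c = 100.1     # instrument reading from the example

error_c = measurement_error(reading_c, reference_c)
percent_error = error_c / reference_c * 100  # error as a percentage of the reference

print(f"error = {error_c:+.1f} °C ({percent_error:+.1f} % of reference)")
```

A positive result means the instrument reads high; a negative result means it reads low.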

2. Precision

Precision refers to how consistent or repeatable measurements are when taken multiple times under the same conditions.

Key points

- Focuses on repeatability of measurements.

- An instrument can be precise even if it is not accurate.

- Related to measurement characteristics such as:

  - Repeatability

  - Stability

  - Linearity

  - Hysteresis

Example

Five readings of a 100 g weight:

101 g

101 g

101 g

101 g

101 g

These readings are identical, so the instrument is precise. It is not accurate, however, because every reading is 1 g above the true value of 100 g.
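One way to put numbers on this example is to separate the spread of the readings from their offset. The sketch below uses the sample standard deviation as a rough indicator of precision and the mean offset from the reference as an indicator of accuracy; choosing these two statistics is an assumption, not something a particular standard prescribes here.

```python
import statistics

readings_g = [101, 101, 101, 101, 101]  # five readings from the example
reference_g = 100                       # true value of the weight

mean_reading = statistics.mean(readings_g)
spread_g = statistics.stdev(readings_g)  # sample standard deviation: how much readings vary
bias_g = mean_reading - reference_g      # mean offset from the true value

print(f"spread = {spread_g} g, bias = {bias_g:+} g")
```

A spread of zero with a bias of +1 g is the "precise but not accurate" case: the readings agree with each other, but not with the reference.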

3. Tolerance

Tolerance is the maximum permissible deviation or acceptable error limit for a measurement or instrument.

Key points

- Defined by standards, manufacturer specifications, or process requirements.

- Determines pass or fail during calibration verification.

- Expressed as a ± value around a nominal value, or as upper and lower limits.

Example

Pressure gauge specification:

20 psi ± 0.5 psi

- Upper limit = 20.5 psi

- Lower limit = 19.5 psi

Any measurement within this range is within tolerance.
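The limit check for this gauge can be sketched in a few lines. The helper name `within_tolerance` is illustrative, and treating readings exactly on a limit as passing is an assumption; the governing specification decides whether the limits are inclusive.

```python
def within_tolerance(reading, nominal, tolerance):
    """Return True if the reading lies between the lower and upper limits (inclusive)."""
    lower_limit = nominal - tolerance
    upper_limit = nominal + tolerance
    return lower_limit <= reading <= upper_limit


# Pressure gauge specification from the example: 20 psi ± 0.5 psi
for reading_psi in (19.5, 20.2, 20.6):
    verdict = "within tolerance" if within_tolerance(reading_psi, 20.0, 0.5) else "out of tolerance"
    print(f"{reading_psi} psi: {verdict}")
```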

Comparison Table

| Parameter | Definition | Focus | Example |
|---|---|---|---|
| Accuracy | Closeness to the true value | Correctness | Reading 99.9°C when actual is 100°C |
| Precision | Consistency of repeated measurements | Repeatability | Five readings all around 101°C |
| Tolerance | Allowed deviation limit | Acceptable range | 100°C ± 2°C |

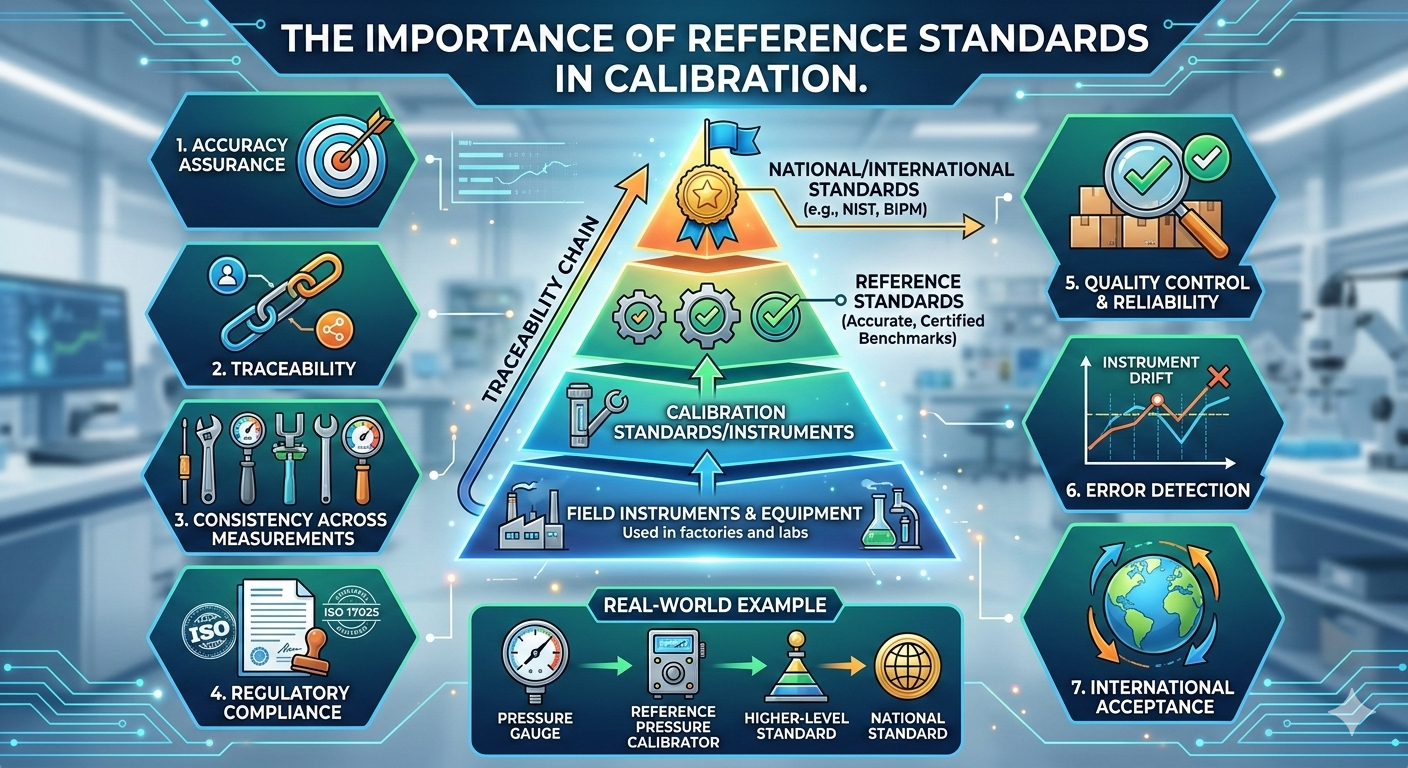

Relationship in Calibration

During instrument calibration:

- The instrument reading is compared with a reference standard.

- Accuracy is evaluated by calculating measurement error.

- Precision is assessed by repeating measurements.

- The results are compared with the specified tolerance to decide Pass or Fail.
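The steps above can be combined into one simplified check. This is a minimal sketch, not a formal calibration procedure: the function name `calibration_check`, the sample readings, and the rule that every individual reading must fall within tolerance are all assumptions made for illustration. Real procedures often also account for the uncertainty of the reference standard when deciding Pass or Fail.

```python
import statistics


def calibration_check(readings, reference, tolerance):
    """Summarize accuracy and precision, then compare against the tolerance."""
    mean_reading = statistics.mean(readings)
    error = mean_reading - reference            # accuracy: mean error against the reference
    repeatability = statistics.stdev(readings)  # precision: spread of repeated readings
    # Assumed decision rule: every reading must be within ± tolerance of the reference.
    passed = all(abs(r - reference) <= tolerance for r in readings)
    return {"error": error, "repeatability": repeatability, "passed": passed}


# Hypothetical repeated readings of a 20 psi reference with a ± 0.5 psi tolerance
result = calibration_check([20.1, 20.2, 20.1, 20.0, 20.2], reference=20.0, tolerance=0.5)

print(f"mean error    = {result['error']:+.2f} psi")
print(f"repeatability = {result['repeatability']:.2f} psi")
print("result        =", "Pass" if result["passed"] else "Fail")
```

Reporting the error and the repeatability separately keeps the two ideas distinct: an instrument can fail on a large offset even when its readings are tightly grouped.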

✔ Simple way to remember

- Accuracy → How close to the true value

- Precision → How consistent the readings are

- Tolerance → How much error is allowed